Risk-Adjusted Intelligence

Software engineers have long thought of themselves as highly paid, skilled tradespeople. That self-image is falling apart now that the barriers to entry are disappearing. The craft is now carried out by AI; the SWE is just the capital allocator, weighing ROI, liquidity risk, and room for diversification. The profession is completely different from what it was just a few years ago. As the other aspects of software engineering recede into the background, its economic logic comes to the foreground.

The central challenge of capital allocation is decision-making under uncertainty. This has traditionally been outside the purview of software engineering: engineers focused on implementation and took certain assumptions about the future as given. The challenge of planning around uncertainty was left to management or investors, to whom a specific project or company, respectively, is just one bet in a portfolio. Now that everyone can build software, SWEs cannot merely build to a spec. They must be able to make guarantees about system performance that embrace and account for uncertainty.

Because the future is uncertain, all assets carry risk, and software is no exception. As the cost of software production goes to zero, many mistakenly believe that building in-house becomes risk-free, but this rests on the simplistic idea that one can only lose what one has paid. Upfront cost is but one consideration in the expected value of adopting software. In fact, a vast distribution of outcomes can follow, some more likely than others, each with a different payoff. Software businesses will underwrite the risk for you, but for that they will collect a premium. One might go further and suggest there will be no need for software at all, that AGI will simply do the right thing at inference time. Here, of course, it comes back to risk. Software is frozen intelligence that can be derisked offline. Abandoning conventional software altogether would introduce too much operational risk.
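To make the expected-value point concrete, here is a minimal sketch in Python. Every probability and payoff in it is invented for illustration, and the names (`Outcome`, `build_in_house`, `buy_from_vendor`) are hypothetical. It only shows that a zero upfront cost does not cap the downside at zero.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    probability: float  # chance this outcome occurs
    payoff: float       # net payoff in arbitrary units (illustrative only)


def expected_value(outcomes: list[Outcome]) -> float:
    """Probability-weighted average payoff of a distribution of outcomes."""
    total = sum(o.probability for o in outcomes)
    if abs(total - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return sum(o.probability * o.payoff for o in outcomes)


def worst_case(outcomes: list[Outcome]) -> float:
    """The most damaging payoff in the distribution, however unlikely."""
    return min(o.payoff for o in outcomes)


# Hypothetical: building in-house costs nothing upfront, but carries a small
# chance of a ruinous failure (outage, data loss) far larger than the build cost.
build_in_house = [
    Outcome(0.70, 100.0),
    Outcome(0.25, 20.0),
    Outcome(0.05, -1000.0),
]

# Hypothetical: a vendor underwrites the tail risk and keeps a premium,
# so the best case is lower but the worst case is bounded.
buy_from_vendor = [
    Outcome(0.95, 70.0),
    Outcome(0.05, 0.0),
]

for name, option in [("build", build_in_house), ("buy", buy_from_vendor)]:
    print(name, expected_value(option), worst_case(option))
```

With these made-up numbers, building has the higher best case but an expected value of roughly 25 and a worst case of -1000, while buying has an expected value of roughly 66.5 and a worst case of 0. Different numbers would tilt the comparison the other way; the point is only that the whole distribution matters, not the sticker price.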

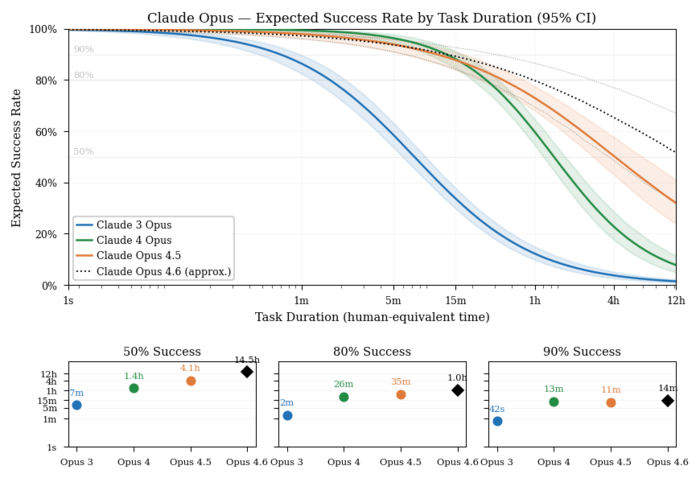

In the chart above, you’ll notice that the time horizon is quickly extending for SOTA models; however, progress is most rapid at the 50% success rate. At 80% and 90%, the trend is much slower or even non-existent. It follows that guaranteed outcomes will lag frontier capabilities. This is the jagged frontier that software can smooth over. There is risk attached to developing bespoke software, but there’s also significant risk in not developing the software that can bolster AI agents.
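One way to see why reliability thresholds matter so much is a toy model, sketched here in Python. It assumes that a task is a chain of independent steps, that each step succeeds with the same probability, and that conventional software can detect a failed step perfectly and retry it. None of these assumptions comes from the chart, and the function names are hypothetical.

```python
def chain_success(p_step: float, n_steps: int) -> float:
    """Probability that n independent steps all succeed."""
    return p_step ** n_steps


def step_success_with_retries(p_step: float, max_attempts: int) -> float:
    """Probability that a step succeeds within max_attempts tries,
    assuming independent attempts and a checker that catches every failure."""
    return 1.0 - (1.0 - p_step) ** max_attempts


p = 0.9  # illustrative per-step success rate of an agent
n = 20   # illustrative number of steps in a long task

unaided = chain_success(p, n)
checked = chain_success(step_success_with_retries(p, 3), n)

print(f"unaided: {unaided:.3f}")
print(f"checked: {checked:.3f}")
```

Under these assumptions, a 90% per-step agent finishes the 20-step task about 12% of the time on its own and about 98% of the time when each step is checked and retried up to three times. Real verifiers are imperfect and real failures are correlated, so the gain in practice would be smaller, but the direction of the effect is what the paragraph above describes.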

In a world of strong AI, the role of conventional software is to narrow the distribution of possible outcomes so as to avoid ruinous tail risks. Software firms of the future will be in the business of combining AI and conventional software to achieve high efficacy on economically valuable tasks with minimal risk. Because AGI cannot bring an end to uncertainty, it does not foreclose on opportunity, for SWEs or anyone else. It would take something much scarier to achieve that.